News


Machine Learning for Early Prediction of Cognitive Decline in Alzheimer’s Disease

Background: Alzheimer’s disease (AD) is a progressive neurodegenerative disorder characterized by a long preclinical phase during which cognitive impairment develops gradually. Because current therapeutic strategies can only slow disease progression, early identification of subjects at risk is a crucial challenge. Mild cognitive impairment (MCI) represents an intermediate stage between normal cognition and dementia and is widely recognized as a key target for early diagnosis and preventive intervention. However, early clinical manifestations are often subtle and insufficient for a reliable diagnosis. In this context, Machine Learning (ML) techniques, especially when combined with multimodal biomarkers, offer promising tools for the early prediction of cognitive decline.

Objectives: The main objective of this study is to develop an interpretable Machine Learning model capable of predicting the transition from cognitively normal (CN) status to mild cognitive impairment (MCI) using baseline multimodal biomarkers. By leveraging data from the Alzheimer’s Disease Neuroimaging Initiative (ADNI), the study aims to:

- identify the most informative biomarkers associated with early cognitive decline;

- assess the predictive performance of a Random Forest classifier;

- support early risk stratification in individuals without overt clinical symptoms.

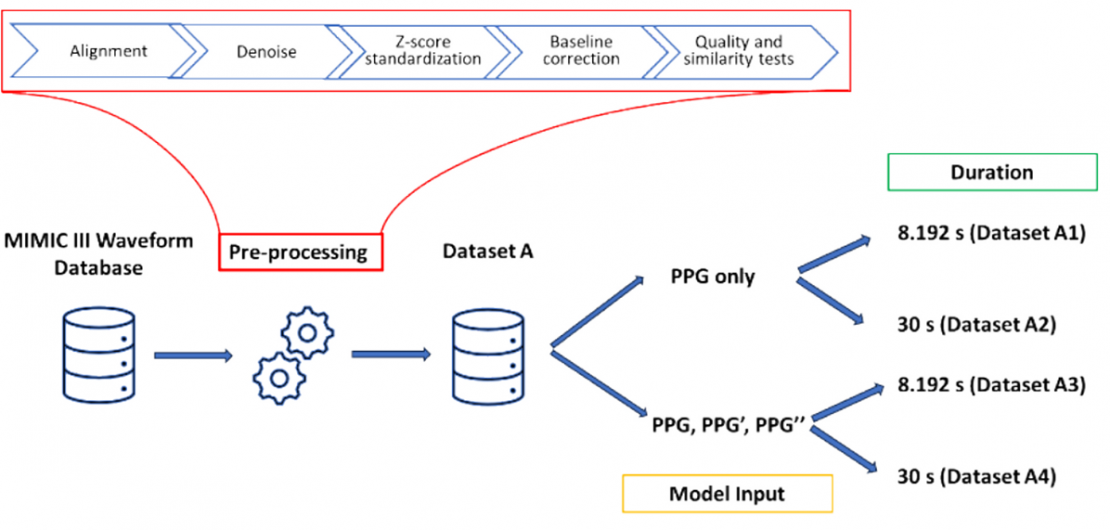

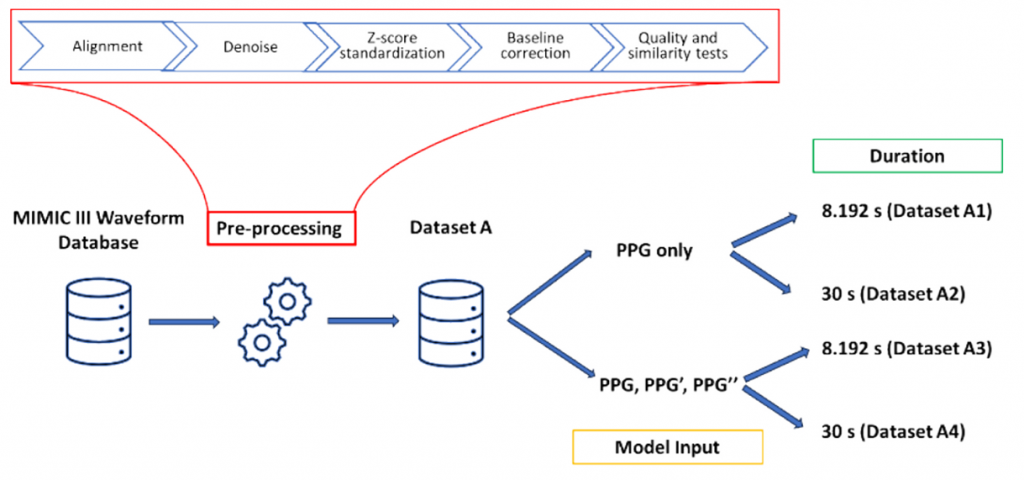

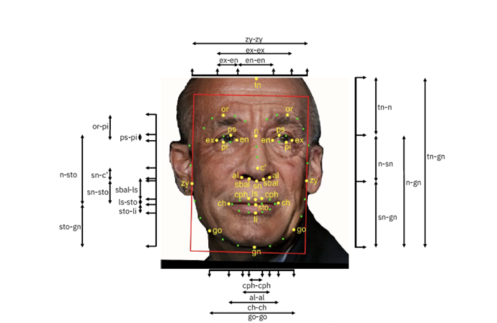

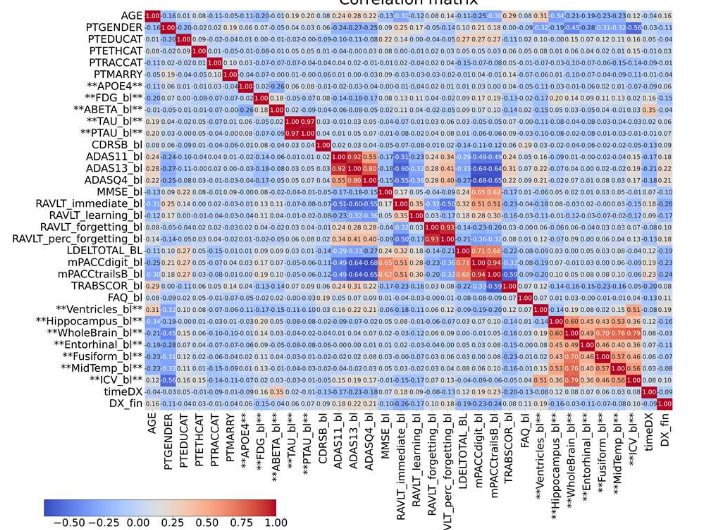

Methods: The study is based on the ADNI Merge dataset, which includes longitudinal observations of more than 2,400 subjects described by demographic variables, cognitive assessments, cerebrospinal fluid biomarkers, PET imaging, and radiomic features. Only subjects who were CN at baseline and had at least one follow-up visit were included, and the outcome of interest was stability versus progression to MCI. A structured preprocessing pipeline was implemented: age at each visit was reconstructed, categorical variables were encoded, and diagnostic labels were harmonized. Missing values were systematically analyzed to assess data quality, as shown in Figure 1.

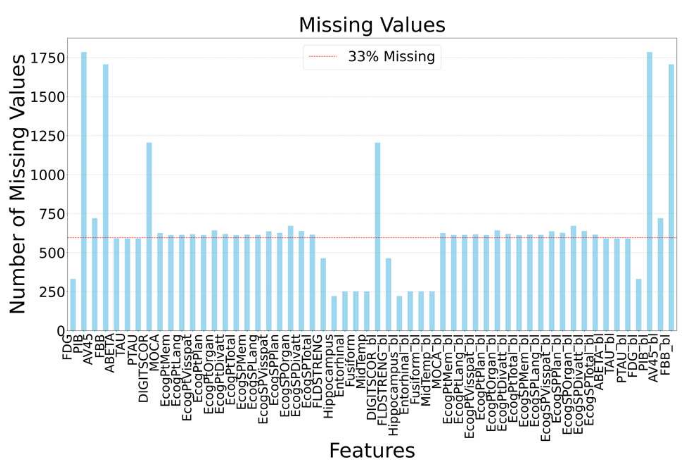

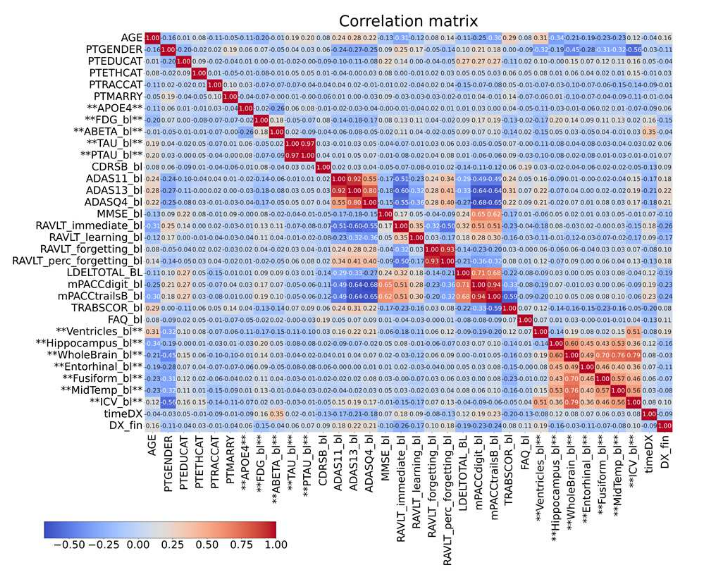
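The cohort selection and labeling described above can be sketched with pandas. This is a minimal illustration under stated assumptions, not the study's code: it assumes a long-format table with one row per visit and column names modeled on ADNIMERGE conventions (`RID`, `Years_bl`, `AGE`, `DX_bl`, `DX`, `PTGENDER`), and the function `build_cn_cohort`, the `DX_MAP` harmonization table (including mapping `SMC` to CN), and the `PROGRESSED` label are all hypothetical.

```python
import pandas as pd

# Hypothetical harmonization map onto a common CN / MCI / AD vocabulary.
# The study's actual mapping is not described; this is an assumption.
DX_MAP = {
    "CN": "CN", "SMC": "CN",
    "EMCI": "MCI", "LMCI": "MCI", "MCI": "MCI",
    "AD": "AD", "Dementia": "AD",
}


def build_cn_cohort(visits: pd.DataFrame, categorical=("PTGENDER",)) -> pd.DataFrame:
    """One row per subject who is CN at baseline and has >= 1 follow-up visit.

    Adds AGE_VISIT (age at the visit) and PROGRESSED (1 = MCI or worse at any
    follow-up, 0 = stable CN). Column names are assumptions, not the study's.
    """
    df = visits.copy()
    # Reconstruct age at each visit: baseline age plus years since baseline.
    df["AGE_VISIT"] = df["AGE"] + df["Years_bl"]
    # Harmonize diagnostic labels; labels missing from DX_MAP become NaN.
    df["DX_bl"] = df["DX_bl"].map(DX_MAP)
    df["DX"] = df["DX"].map(DX_MAP)

    cn = df[df["DX_bl"] == "CN"]
    # Follow-up visits are those after baseline with a known diagnosis.
    follow = cn[(cn["Years_bl"] > 0) & cn["DX"].notna()]
    progressed = (
        (follow["DX"] != "CN")
        .groupby(follow["RID"])
        .any()
        .astype(int)
        .rename("PROGRESSED")
    )

    # Baseline row per subject; the inner join drops subjects without follow-up.
    baseline = cn[cn["Years_bl"] == 0].drop_duplicates("RID").set_index("RID")
    out = baseline.join(progressed, how="inner").reset_index()

    # One-hot encode the categorical variables that are present.
    cat_cols = [c for c in categorical if c in out.columns]
    return pd.get_dummies(out, columns=cat_cols, drop_first=True, dtype=int)
```

Labeling progression as "any non-CN diagnosis at any follow-up" is one reasonable reading of "stability versus progression to MCI"; a stricter definition (for example, requiring the MCI diagnosis to persist at later visits) would change only the `progressed` step.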

Features with more than 33% missing values were removed, and the remaining missing values were imputed using a k-Nearest Neighbors (k-NN) approach. Exploratory analysis then used correlation matrices to investigate relationships between biomarkers.

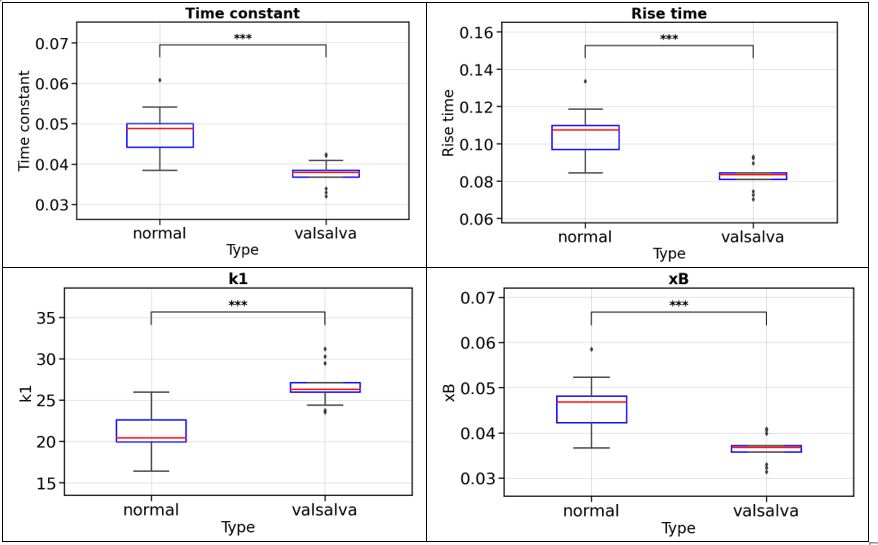

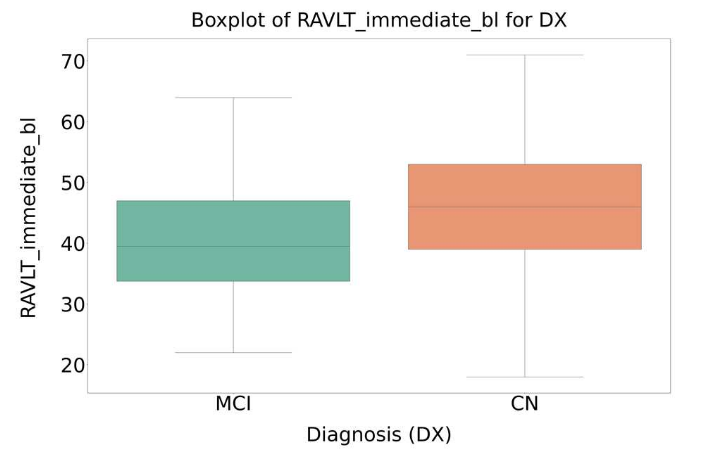
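The missing-value filter and k-NN imputation can be sketched with scikit-learn's `KNNImputer`. This is a hedged illustration: the function name `drop_sparse_and_impute`, the default of 5 neighbors, and the assumption that all columns are already numeric are choices made here, not details reported by the study.

```python
import pandas as pd
from sklearn.impute import KNNImputer


def drop_sparse_and_impute(features: pd.DataFrame, max_missing: float = 0.33,
                           n_neighbors: int = 5) -> pd.DataFrame:
    """Drop columns with more than max_missing missing values, then k-NN impute.

    Assumes every column is numeric (categorical variables already encoded).
    """
    # Fraction of missing values per feature.
    missing_frac = features.isna().mean()
    kept = features.loc[:, missing_frac <= max_missing]
    # Each missing entry becomes the mean of that feature over the n_neighbors
    # nearest subjects, using a NaN-aware Euclidean distance.
    imputer = KNNImputer(n_neighbors=n_neighbors)
    filled = imputer.fit_transform(kept)
    return pd.DataFrame(filled, columns=kept.columns, index=kept.index)
```

On the returned frame, `clean.corr()` (Pearson by default) gives a correlation matrix of the kind used for exploratory analysis. In a train/test setting the imputer would normally be fit on the training split only, so that test subjects do not influence the imputed values.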

Analysis of variance (ANOVA) was applied to identify variables that differ significantly between the CN and MCI groups; an example is shown in Figure 3.
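A per-feature ANOVA screen of this kind can be sketched with SciPy's `f_oneway`. The function name `anova_screen`, the `PROGRESSED` label column, and the 0.05 threshold are assumptions for illustration. With only two groups, the one-way ANOVA F statistic equals the square of the equal-variance two-sample t statistic, so the screen is equivalent to a t-test per feature.

```python
import pandas as pd
from scipy.stats import f_oneway


def anova_screen(df: pd.DataFrame, label: str = "PROGRESSED",
                 alpha: float = 0.05) -> pd.DataFrame:
    """One-way ANOVA per feature across the classes in `label`, sorted by p-value."""
    rows = []
    for col in df.columns.drop(label):
        # One sample of the feature per class (e.g. stable CN vs. converters).
        groups = [grp[col].dropna().to_numpy() for _, grp in df.groupby(label)]
        f_stat, p_value = f_oneway(*groups)
        rows.append({"feature": col, "F": f_stat, "p": p_value})
    result = pd.DataFrame(rows).sort_values("p").reset_index(drop=True)
    # No multiple-comparison correction is applied here; with many biomarkers,
    # a Bonferroni or false-discovery-rate adjustment may be preferable.
    result["significant"] = result["p"] < alpha
    return result
```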

Finally, an interpretable Random Forest classifier was trained on the baseline features, with hyperparameters optimized to balance sensitivity and specificity.
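One way to tune a Random Forest so that sensitivity and specificity stay balanced is to grid-search with balanced accuracy as the score, since for a binary problem balanced accuracy is the mean of the two. This is a sketch under assumptions: the grid, `class_weight="balanced"`, 5-fold stratified cross-validation, and the helper names `train_random_forest` and `top_features` are illustrative choices, not the study's reported configuration.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, StratifiedKFold


def train_random_forest(X, y, random_state: int = 0) -> RandomForestClassifier:
    """Grid-search a Random Forest, scoring each candidate by balanced accuracy."""
    # Illustrative grid; the study's search space is not specified.
    param_grid = {
        "max_depth": [None, 5],
        "min_samples_leaf": [1, 5],
    }
    # class_weight="balanced" reweights classes inversely to their frequency.
    base = RandomForestClassifier(n_estimators=200, class_weight="balanced",
                                  random_state=random_state)
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=random_state)
    search = GridSearchCV(base, param_grid, scoring="balanced_accuracy", cv=cv)
    search.fit(X, y)
    # With refit=True (the default), the best configuration is retrained on all data.
    return search.best_estimator_


def top_features(model: RandomForestClassifier, names, k: int = 5):
    """Return the k features with the highest impurity-based importance."""
    order = np.argsort(model.feature_importances_)[::-1][:k]
    return [(names[i], float(model.feature_importances_[i])) for i in order]
```

Impurity-based importances are one simple way to rank biomarkers from a fitted forest; permutation importance on held-out data is a common alternative when features are correlated, as cognitive scores and volumetric measures often are.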

Results: The proposed Random Forest model achieved an overall classification accuracy of 76%, with a sensitivity of 64% and a specificity of 84% in predicting progression from CN to MCI. Exploratory and statistical analyses highlighted that cognitive test scores (e.g., RAVLT, ADAS, mPACC) provided the strongest discriminative power, supported by neurostructural radiomic features such as ventricular and hippocampal volumes.
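The three reported metrics follow directly from the binary confusion matrix, taking progression to MCI as the positive class. The helper below is a hypothetical sketch for computing them on a held-out set; it is not the evaluation code used in the study.

```python
from sklearn.metrics import confusion_matrix


def binary_metrics(y_true, y_pred):
    """Accuracy, sensitivity and specificity with 1 = progression to MCI."""
    # labels=[0, 1] fixes the layout: rows are true classes, columns predicted.
    tn, fp, fn, tp = confusion_matrix(y_true, y_pred, labels=[0, 1]).ravel()
    return {
        "accuracy": float((tp + tn) / (tp + tn + fp + fn)),
        "sensitivity": float(tp / (tp + fn)),  # recall on converters
        "specificity": float(tn / (tn + fp)),  # recall on stable CN subjects
    }
```

Because accuracy equals prevalence × sensitivity + (1 − prevalence) × specificity, an accuracy figure always lies between the sensitivity and the specificity, and where it falls depends on the share of converters in the evaluation set.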

Conclusions: This study demonstrates the effectiveness of an interpretable Machine Learning approach for the early prediction of cognitive decline in Alzheimer’s disease. By integrating multimodal baseline biomarkers, the proposed Random Forest model provides reliable performance in identifying individuals at risk of progression to MCI. The results support the use of data-driven methods to enhance early diagnosis and risk stratification, contributing to the development of personalized and preventive strategies in the management of neurodegenerative diseases.

References:

[1] L. De Palma, A. Di Nisio, A. M. Lucia Lanzolla, P. Matarrese, E. M. Pich and F. Attivissimo, “Machine Learning for Early Prediction of Cognitive Decline in Alzheimer’s Disease,” 2025 IEEE Medical Measurements & Applications (MeMeA), Chania, Greece, 2025, pp. 1-6, doi: 10.1109/MeMeA65319.2025.11068006.